
AddressIQ is a property intelligence toolkit built for the Dutch real estate market. It acts as a central engine that looks up an address and enriches it with a wide range of datasets, from cadastral and demographic information to environmental risks and market data.

I designed and built this system entirely from scratch to solve a specific problem: the fragmentation of Dutch property data. By aggregating disparate sources into a unified API and scoring engine, AddressIQ provides actionable insights for developers, investors, and data engineers.

🚀 Key Achievements

- Adoption: Currently serving ~100 Daily Active Users (DAU).

- Performance: Achieving a 2% conversion rate from free to premium insights.

- Solo Engineering: Built the entire stack (Frontend, Backend, DevOps, CI/CD) independently.

- Infrastructure: Moved away from managed PaaS to a custom unmanaged VPS setup using Hetzner and Coolify, significantly reducing cloud costs while increasing control.

🛠️ Technical Architecture & Design Choices

The Core: Go (Golang) Backend

I chose Go (v1.23+) for the backend primarily for its raw performance and strong typing, which is crucial when handling complex data structures from 35+ different external APIs.

The backend is structured around a Modular Monolith architecture, ensuring code maintainability without the premature complexity of microservices.

1. The Aggregator Pattern

The heart of the application is the PropertyAggregator service. It orchestrates the retrieval of data from dozens of sources. Instead of tightly coupling the API logic to the handlers, I separated the data fetching into distinct client domains (Environmental, Infrastructure, Market, etc.).

Design Insight: Data consistency is a major challenge when integrating government APIs (PDOK, CBS) with commercial ones (Matrixian, Altum). The aggregator normalises these varied response formats into a single ComprehensivePropertyData struct, which serves as the “source of truth” for the application.

2. The Enhanced Scoring Engine

Raw data is useless without context. I implemented a sophisticated scoring engine (backend/pkg/scoring/enhanced_scoring.go) that translates raw metrics into human-readable scores (0-100) across three pillars: ESG, Profit, and Opportunity.

Here is a glimpse into how the overall score is weighted in the engine:

```go
// Calculate Overall Score (weighted average)
scores.OverallScore = (scores.ESGScore*0.3 + scores.ProfitScore*0.4 + scores.OpportunityScore*0.3)

// Determine Risk Level based on cumulative risk factors
scores.RiskLevel = se.calculateRiskLevel(data)
```

The engine dynamically adjusts scores based on complex logic, such as penalising environmental scores for soil contamination or boosting opportunity scores based on zoning laws and renovation potential.
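To make the adjustment step concrete, here is a minimal sketch of a penalty applied before the weighted average. The 0.3/0.4/0.3 weights come from the snippet above; the 20-point soil penalty, the clamp helper, and the function names are illustrative assumptions, since the actual rules in enhanced_scoring.go are not shown here.

```go
package main

import "fmt"

// Scores mirrors the three pillars plus the overall score.
type Scores struct {
	ESGScore, ProfitScore, OpportunityScore, OverallScore float64
}

// clamp keeps a score inside the 0-100 range.
func clamp(v float64) float64 {
	if v < 0 {
		return 0
	}
	if v > 100 {
		return 100
	}
	return v
}

// applySoilPenalty lowers the ESG score when contamination is reported.
// The 20-point penalty is an assumption chosen for illustration.
func applySoilPenalty(esg float64, contaminated bool) float64 {
	if contaminated {
		esg -= 20
	}
	return clamp(esg)
}

// overall combines the pillars using the 0.3/0.4/0.3 weights.
func overall(s Scores) float64 {
	return clamp(s.ESGScore*0.3 + s.ProfitScore*0.4 + s.OpportunityScore*0.3)
}

func main() {
	s := Scores{
		ESGScore:         applySoilPenalty(70, true),
		ProfitScore:      80,
		OpportunityScore: 60,
	}
	s.OverallScore = overall(s)
	fmt.Printf("ESG=%.0f overall=%.1f\n", s.ESGScore, s.OverallScore)
}
```

Clamping after every adjustment keeps each pillar within 0-100, so a stack of penalties or boosts cannot push the weighted average out of range.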

Infrastructure & DevOps (The “Hard Way”)

Instead of relying on managed services like Vercel or Heroku, I wanted to master the entire deployment pipeline.

- Host: Hetzner Cloud (CPX31 VPS running Ubuntu 22.04).

- Orchestration: Coolify (Self-hosted PaaS).

- Containerisation: Docker & Docker Compose.

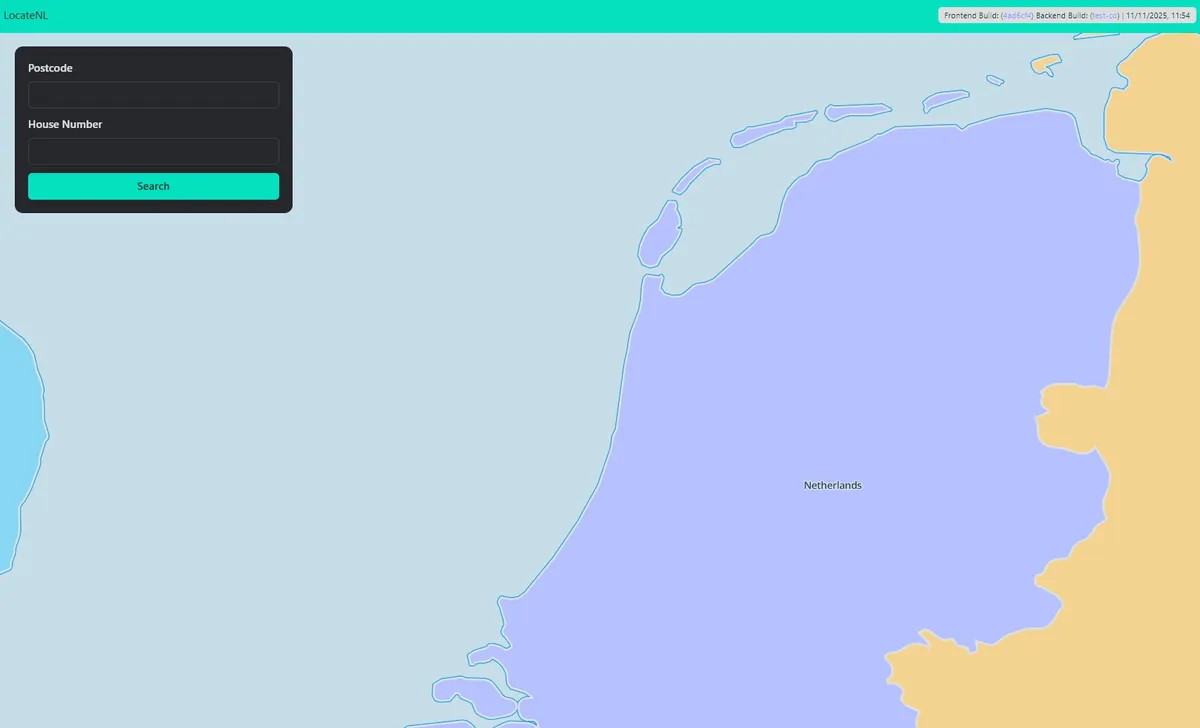

- CI/CD: I built a custom pipeline that injects Git commit hashes directly into the binary during the build process. This allows for precise version tracking in production.

Build Hash Injection: I implemented a system where the frontend and backend are aware of the exact commit they were built from, useful for debugging in production.
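On the Go side, one common way to receive such a value is a package-level variable that the linker overwrites through its -X flag. The sketch below assumes that approach; the variable names and the versionString helper are hypothetical, and this write-up does not show how the Dockerfile forwards COMMIT_SHA to the compiler.

```go
package main

import "fmt"

// These defaults apply to local builds. A release build can overwrite them,
// for example:
//
//	go build -ldflags "-X main.commitSHA=$COMMIT_SHA -X main.buildDate=$BUILD_DATE"
//
// The variable names are illustrative assumptions.
var (
	commitSHA = "dev"
	buildDate = "unknown"
)

// versionString formats the build metadata, shortening long hashes to the
// usual 7-character abbreviation.
func versionString(sha, date string) string {
	if len(sha) > 7 {
		sha = sha[:7]
	}
	return fmt.Sprintf("commit %s (built %s)", sha, date)
}

func main() {
	fmt.Println(versionString(commitSHA, buildDate))
}
```

Exposing this string on a health or version endpoint is one way to confirm which commit is actually running in production.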

```bash
# From my deployment script
docker build \
  --build-arg COMMIT_SHA="$SOURCE_COMMIT" \
  --build-arg BUILD_DATE="$(date -u +%Y-%m-%dT%H:%M:%SZ)" \
  -f backend/Dockerfile ./backend
```

Data Strategy & Caching

To handle the latency inherent in querying 35+ external APIs, I implemented a caching layer using Redis.

- Strategy: API responses are cached with specific TTLs (Time To Live). Static data (like soil types) is cached longer than volatile data (like traffic or weather).

- Result: Repeat lookups are served from the cache, which significantly reduces their latency and protects against rate limits from external providers.

🧩 Features Overview

1. Comprehensive Data Aggregation

The system integrates with key Dutch datasets, including:

- PDOK & Kadaster: For official ownership, borders, and building definitions.

- CBS (Statistics Netherlands): For hyperlocal demographic and income data.

- Environmental APIs: Fetching soil quality, flood risks, and noise pollution levels.

- Market Data: Integrating real-time traffic (NDW) and solar potential.

2. Intelligent Scoring Models

- ESG Score: Analyses energy labels, flood risk, and social livability.

- Profit Potential: Estimates rental yields, market demand, and historic price appreciation.

- Renovation Opportunity: Identifies properties with high “value-add” potential (e.g., poor energy labels in high-demand neighbourhoods).

3. Production-Grade Reliability

The application includes robust error handling and logging (logutil package). If a non-critical API (like Weather) fails, the aggregator degrades gracefully, returning the partial profile without crashing the entire request.

🧠 What I Learned

This project was my vehicle for bridging the gap between “coding” and “software engineering”.

- DevOps Mastery: Managing a Linux VPS, configuring firewalls, and setting up reverse proxies with SSL gave me a deep appreciation for the infrastructure layer often abstracted away by cloud providers.

- LLMOps & AI: I experimented with integrating AI workflows for data interpretation, learning how to sanitise inputs and manage context windows effectively for production use cases.

- Go Concurrency & Patterns: Structuring a Go application for scale, managing dependencies effectively, and writing idiomatic Go code.

AddressIQ represents not just a product, but a proof of competency in full-stack system design, from the database layer up to the user interface and the metal it runs on.